Telepresence

Introduction

Advanced communication and remote collaboration are key requirements of our modern society. Yet the notion of presence over large distances is relatively poorly understood from a scientific point of view, and a variety of perceptual and technical research challenges must be addressed to design truly convincing telepresence systems. Existing practical solutions for remote communication and telecollaboration focus on video conferencing, which is significantly limited: standard commercial video conferencing systems rely on 2D displays and single video cameras to capture the collaborators, and they lack the ability to capture and render all the details required for a seamless, immersive experience. To enrich the expressiveness of human communication, we envision solutions that let us perceive our remote collaborators as seamlessly embedded into our own working environment, through novel 3D display systems and through acquisition systems that capture not only video streams but also other types of information, such as 3D geometry. The ideal system is a sensory-rich environment that provides a highly compelling experience, the epitome of presence, including perceptually crucial cues such as eye contact, mimicry, and gestures.

Topics

Displays

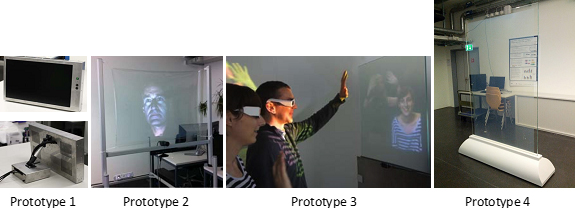

The figure illustrates the evolution of the 3D displays designed and developed in the context of the BeingThere Center. The first prototype is a parallax barrier display realized by stacking LCD panels. While its 3D effect can be perceived without special glasses, the display is fully opaque. The second prototype is our first transparent 3D display. It is based on a projection foil and requires active shutter glasses. Although these glasses produce a vivid 3D effect, their weight and size make them impractical. With Prototype 3, we improve on this transparent stereoscopic display by replacing the active shutter glasses with lightweight passive glasses. In this case, the display, a glass panel, embeds a special anisotropic film, and two projectors behind the display project light with different polarizations onto it. Due to the anisotropic properties of the setup, the displayed image appears vivid even under relatively bright lighting. In addition, immersion is enhanced by adding motion parallax to the binocular cues: eye tracking is used to adapt the rendered content to the viewer's position. Prototype 4 is a life-size version of Prototype 3. A bidirectional setup consisting of two life-size displays is installed at ETH and NTU.
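Head-tracked motion parallax of this kind is commonly implemented with an off-axis (asymmetric-frustum) perspective projection that is recomputed every frame from the tracked eye position. The sketch below is not the BeingThere implementation; it assumes a hypothetical coordinate frame in which the screen lies in the plane z = 0, centered at the origin, with the tracked eye at positive z, all in meters. The names `off_axis_frustum` and `frustum_matrix` are illustrative, and the matrix follows the layout used by OpenGL's `glFrustum`.

```python
from dataclasses import dataclass


@dataclass
class Frustum:
    left: float
    right: float
    bottom: float
    top: float
    near: float
    far: float


def off_axis_frustum(eye, screen_w, screen_h, near, far):
    """Asymmetric view frustum for a screen lying in the z = 0 plane.

    Assumed convention: the screen is centered at the origin with size
    screen_w x screen_h, and eye = (ex, ey, ez) with ez > 0. The virtual
    camera sits at the eye and looks down -z, perpendicular to the screen.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen (ez > 0)")
    # Project the screen edges, as seen from the eye, onto the near plane.
    scale = near / ez
    return Frustum(
        left=(-screen_w / 2 - ex) * scale,
        right=(screen_w / 2 - ex) * scale,
        bottom=(-screen_h / 2 - ey) * scale,
        top=(screen_h / 2 - ey) * scale,
        near=near,
        far=far,
    )


def frustum_matrix(f):
    """Row-major 4x4 perspective matrix with the same layout as glFrustum."""
    l, r, b, t, n, fa = f.left, f.right, f.bottom, f.top, f.near, f.far
    return [
        [2 * n / (r - l), 0.0, (r + l) / (r - l), 0.0],
        [0.0, 2 * n / (t - b), (t + b) / (t - b), 0.0],
        [0.0, 0.0, -(fa + n) / (fa - n), -2 * fa * n / (fa - n)],
        [0.0, 0.0, -1.0, 0.0],
    ]
```

When the eye is centered in front of the screen the frustum is symmetric; as the viewer moves sideways it skews, so rendered objects appear fixed relative to the physical display. Because the screen stays axis-aligned in this frame, the matching view matrix is simply a translation by the negated eye position.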

Gaze Awareness

Effective communication using current video conferencing systems is severely hindered by the lack of eye contact, which is caused by the offset between the camera and the on-screen image of the remote participant at which the user is looking. While this problem has been partially solved for expensive high-end video conferencing systems, it has not been convincingly solved for consumer-level setups. The gaze correction method developed in this topic is suitable for a variety of scenarios, such as mainstream home video conferencing, as it uses inexpensive consumer hardware and achieves real-time performance.
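To give a sense of the magnitude of the problem, the gaze error is the angle between the viewer's line of sight to the on-screen target and the line from the viewer to the camera. The sketch below computes this angle for a hypothetical webcam mounted 15 cm above the point on the screen at which the user looks, at a viewing distance of 60 cm, and provides a helper that rotates 3D points (for example, a face captured by a depth camera) by that angle about a horizontal axis through the head. Rotating captured geometry is only one conceivable correction strategy and is not claimed to be the method described above; the function names, the numbers, and the sign convention are assumptions.

```python
import math


def gaze_error_deg(camera_offset_m, viewing_distance_m):
    """Angle between the line of sight to the on-screen target and the line
    to the camera, assuming the camera is displaced perpendicular to the
    viewing axis by camera_offset_m."""
    return math.degrees(math.atan2(camera_offset_m, viewing_distance_m))


def rotate_about_x(points, angle_deg, pivot):
    """Rotate 3D points about the horizontal x-axis passing through pivot.

    Positive angles rotate +y toward +z (right-hand rule about +x). Which
    sign corrects the gaze depends on the camera coordinate frame in use.
    """
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    px, py, pz = pivot
    rotated = []
    for x, y, z in points:
        dy, dz = y - py, z - pz
        rotated.append((x, py + c * dy - s * dz, pz + s * dy + c * dz))
    return rotated


angle = gaze_error_deg(0.15, 0.60)
print(f"gaze error: {angle:.1f} degrees")  # prints "gaze error: 14.0 degrees"
```

An error of roughly 14 degrees in this assumed setup is large enough for the remote participant to perceive that the user is looking away, which is why correcting it matters even for casual home use.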

Acquisition

Multiview reconstruction aims to compute the geometry of a scene observed by a set of cameras. Accurate 3D reconstruction of dynamic scenes is a key component of a wide variety of applications, ranging from special effects to telepresence and medical imaging. In this topic, we develop methods to robustly and efficiently reconstruct dynamic scenes captured by a set of cameras. Our aim is to achieve spatio-temporal consistency in real time by seamlessly fusing color and geometric information into a coherent representation.
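A basic building block of multiview reconstruction is triangulation: recovering a 3D point from its observations in two or more calibrated cameras. The sketch below uses the classic midpoint method for two viewing rays, each given by a camera center and a direction vector that is assumed to have already been computed from calibration data and pixel coordinates (directions need not be normalized). It only illustrates the geometric principle; it is not the spatio-temporal fusion method developed in this topic, and the function names are hypothetical.

```python
def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _add_scaled(p, d, t):
    return tuple(x + t * y for x, y in zip(p, d))


def triangulate_midpoint(c1, d1, c2, d2, eps=1e-12):
    """Midpoint of the shortest segment between rays c1 + t*d1 and c2 + s*d2.

    The parameters t and s minimize |(c1 + t*d1) - (c2 + s*d2)|^2, obtained
    by setting both partial derivatives to zero.
    """
    w0 = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < eps:
        raise ValueError("rays are (nearly) parallel; point cannot be triangulated")
    t = (b * e - c * d) / denom
    s = (a * e - b * d) / denom
    p1 = _add_scaled(c1, d1, t)  # closest point on ray 1
    p2 = _add_scaled(c2, d2, s)  # closest point on ray 2
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


# Two cameras 2 m apart, both observing the point (1, 1, 5).
print(triangulate_midpoint((0, 0, 0), (1, 1, 5), (2, 0, 0), (-1, 1, 5)))
```

With noise-free rays the two closest points coincide and the midpoint is the exact intersection; with noisy measurements the rays are skew and the midpoint gives a simple estimate. With more than two cameras, linear least-squares triangulation (such as the DLT method) is a common generalization.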

Publications

2016

An Immersive Bidirectional System for Life-size 3D Communication

Proceedings of the 29th International Conference on Computer Animation and Social Agents (CASA) (Geneva, Switzerland, May 23-25, 2016), pp. 89-96

2014

Immersive 3D Telepresence

IEEE Computer, IEEE, vol. 47, no. 7, 2014, pp. 46-52

Transparent Stereoscopic Display and Application

Proceedings of SPIE 9011 (San Francisco, USA, February 3-6, 2014), pp. 90110P

Vision-based calibration of parallax barrier displays

Proceedings of SPIE 9011 (San Francisco, USA, February 3-6, 2014), pp. 90111D

Spatio-Temporal Geometry Fusion for Multiple Hybrid Cameras using Moving Least Squares Surfaces

Proceedings of Eurographics (Strasbourg, France, April 7-11, 2014), Computer Graphics Forum, vol. 33, no. 2, pp. 1-10

2013

Multi-planar plenoptic displays

Journal of the Society for Information Display, Blackwell Publishing Ltd, vol. 21, no. 10, 2013, pp. 451-459

Light-Field Approximation Using Basic Display Layer Primitives

SID Symposium Digest of Technical Papers 2013 (Vancouver, Canada, May 19-24, 2013), pp. 408-411

2012

Towards Next Generation 3D Teleconferencing Systems

Proceedings of 3DTV-CON (Zurich, Switzerland, October 15-17, 2012), pp. 1-4

2011

FreeCam: A Hybrid Camera System for Interactive Free-Viewpoint Video

Proceedings of Vision, Modeling, and Visualization (VMV) (Berlin, Germany, October 4-6, 2011), pp. 17-24 (2nd Place Best Paper Award)