Project Archive

blue-c-II: Open the Real, Physical World to blue-c Users

Stephan Würmlin (wuermlin@inf.ethz.ch)

Michael Waschbüsch (waschbuesch@inf.ethz.ch)

Doo-Young Kwon (dkwon@inf.ethz.ch)

http://blue-c-ii.ethz.ch

The focus of blue-c-II is to investigate and develop fundamental methods for interactive, view-independent 2D and 3D video and display technology that is able to acquire ordinary, open, and complex physical environments. As opposed to blue-c-I, which featured a small range of operation confined to objects, blue-c-II research will be devoted to representing scenes in ordinary rooms or offices. Also, whereas blue-c-I displayed prefabricated scenes, blue-c-II will feature on-line interaction with these scenes. Many individual, off-the-shelf hardware components will be dispersed throughout the scene, resulting in "seas of cameras, displays, and compute nodes". Our main goal is to design flexible and computationally efficient methods that interactively process and display the multiple live video streams that emerge from such sources. While blue-c-I was aimed at building the technical setups, blue-c-II will primarily focus on theoretical and algorithmic aspects of the representation, compression, streaming, calibration, interaction, control, and management of data emerging from large arrangements, with applications as diverse as surveillance, tele-education, tele-training, and remote product design. As in blue-c-I, we will bring together a multidisciplinary team of experts from computer graphics, computer vision, mechanical and electrical engineering, and architecture.

blue-c: A Spatially Immersive Display and 3D Video Portal for Telepresence

Stephan Würmlin (wuermlin@inf.ethz.ch)

Edouard Lamboray (lamboray@inf.ethz.ch)

Martin Naef (naef@inf.ethz.ch)

http://blue-c.ethz.ch

blue-c created a novel hard- and software system that successfully combined the advantages of a CAVE™-like projection environment with simultaneous, real-time 3D video capturing and processing of the user. As a major technical achievement, users can now become part of the virtual scene while keeping visual contact in full 3D. These features make the system a powerful tool for high-end remote collaboration and presentation. blue-c is currently implemented via two portals with complementary characteristics, networked with a gigabit connection. The portals connect the ETH Computing Center in downtown Zurich with the second campus outside of Zurich. Various applications prove the concept and demonstrate its usefulness.

We devised a novel 3D video format and a specialized processing pipeline tailored to our needs. This technology is based on 3D video fragments using irregular point samples as 3D video primitives. This technique combines the simplicity of 2D video processing with the power of 3D video representations. It allows for highly effective encoding and progressive transmission, and supports a multitude of visual effects. Moreover, a 3D video recorder was built, resulting in a system capable of recording, processing, and playing three-dimensional video from multiple points of view. 3D video playback enhances traditional 2D video interaction, such as pause, fast-forward, and slow motion, with novel 3D video effects such as freeze-and-rotate and arbitrary scaling. A new network communication architecture for the blue-c has been designed. It offers various services for managing the nodes of the distributed blue-c system and implements communication channels for real-time streaming of 3D video, audio, and synchronization data. Finally, an API was provided which allows programmers to readily use specific hard- and software features of our system for rapid application development.

GPU-Based Ray-Casting of Quadratic Surfaces

Christian Sigg

Tim Weyrich

Mario Botsch

http://graphics.ethz.ch/people/archive/siggc/

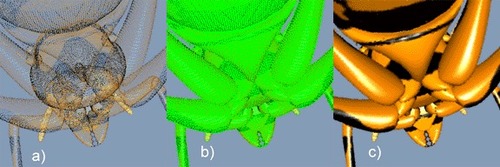

Quadratic surfaces are frequently used primitives in geometric modeling and scientific visualization, for example in the rendering of tensor fields, particles, and molecular structures. While high visual quality can be achieved using sophisticated ray tracing techniques, interactive applications typically use either coarsely tessellated polygonal approximations or pre-rendered depth sprites, thereby trading off visual quality and perspective correctness for higher rendering performance. In contrast, we propose an efficient rendering technique for quadric primitives based on GPU-accelerated splatting. While providing performance similar to point sprites, our method provides perspective correctness and superior visual quality using per-pixel ray-casting.
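The core of per-pixel ray-casting is a ray-quadric intersection, which reduces to solving one quadratic equation per ray. The following is a minimal CPU sketch of that step in Python, not the project's GPU implementation. It assumes the quadric is given as a symmetric 4x4 matrix Q with x^T Q x = 0 in homogeneous coordinates; the names `quad_form`, `ray_quadric_hit`, and `UNIT_SPHERE` are hypothetical.

```python
import math

def quad_form(u, Q, v):
    """Evaluate u^T Q v for 4-vectors u, v and a 4x4 matrix Q."""
    return sum(u[i] * Q[i][j] * v[j] for i in range(4) for j in range(4))

def ray_quadric_hit(origin, direction, Q, eps=1e-12):
    """Return the smallest t >= 0 with (origin + t * direction) on the quadric, or None.

    Assumes Q is symmetric, so substituting the ray into x^T Q x = 0
    yields a*t^2 + 2*b*t + c = 0.
    """
    o = (*origin, 1.0)     # homogeneous point
    d = (*direction, 0.0)  # homogeneous direction
    a = quad_form(d, Q, d)
    b = quad_form(o, Q, d)
    c = quad_form(o, Q, o)
    if abs(a) < eps:
        # The equation degenerates to the linear case 2*b*t + c = 0.
        if abs(b) < eps:
            return None
        t = -c / (2.0 * b)
        return t if t >= 0.0 else None
    disc = b * b - a * c
    if disc < 0.0:
        return None  # the ray misses the quadric
    root = math.sqrt(disc)
    for t in sorted(((-b - root) / a, (-b + root) / a)):
        if t >= 0.0:
            return t
    return None

# Unit sphere x^2 + y^2 + z^2 - 1 = 0 written as a quadric matrix.
UNIT_SPHERE = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, -1.0],
]

hit = ray_quadric_hit((0.0, 0.0, -3.0), (0.0, 0.0, 1.0), UNIT_SPHERE)
miss = ray_quadric_hit((2.0, 0.0, -3.0), (0.0, 0.0, 1.0), UNIT_SPHERE)
```

Ellipsoids, cylinders, and cones differ only in the entries of Q, which is why a single intersection routine can serve all quadric primitives.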

Behavior Modeling for Game Agents

Christoph Niederberger (niederberger@inf.ethz.ch)

http://graphics.ethz.ch/creaZoo/

Recent academic research has begun to look at computer games not only as entertainment but also as an ideal testbed for behavior modeling and simulation. Game environments are highly dynamic, should provide real-time responses, and require human interaction in order to meet the user's requirements. These properties make them ideal for the simulation of agents with natural (and maybe human) characteristics.

The goal of this project is to model such agents in an easy way, both to keep control over the character's global behavior and to loosen the determinism in games where opponents often behave in the same way every time the game is played. The opponents' behavior can also influence the story plot of the game, which therefore becomes dynamic as well.

Additionally, the integration of goal-oriented behavior into the basic reactive behavior is not a trivial task in real-time environments such as games, since the search for the correct sequence of actions to reach the goal state is computationally very expensive.

A Communication Architecture for Highly Immersive Collaborative Virtual Environments

Edouard Lamboray (lamboray@inf.ethz.ch)

http://blue-c.ethz.ch

The goal of this research is the design and implementation of a communication architecture for a tele-immersive and collaborative virtual reality system. Platform independence and scalability from high-end to low-end systems should be achieved through the use of a powerful communication middleware and an advanced programming model, including Quality of Service features. The communication layer must support different end-applications and will dynamically adapt to feedback information from both application and communication network. It will support the transmission of high-quality geometry-enhanced video representations of human users, as well as audio data, and the information resulting from the users' interaction with the shared virtual environment.

Software Integration of Virtual Worlds, Live Media-Streams and Collaboration

Martin Näf (naef@inf.ethz.ch)

http://blue-c.ethz.ch

This dissertation project includes the application programming interface (API) for the blue-c project, a collaborative immersive virtual reality environment. It concentrates on the integration aspect of the different media and data types, namely geometry with attributes (scene graph), live video and audio streams and realistic, spatialized sound rendering. Special care is taken to explain the reasoning behind design decisions and tool selection.

Real-Time Reconstruction and Rendering of Real-World Objects

Stephan Würmlin (wuermlin@inf.ethz.ch)

http://blue-c.ethz.ch

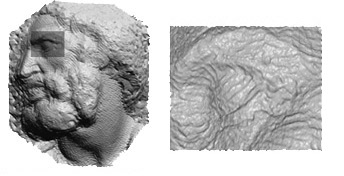

The goal of this thesis is the design and implementation of a graphics engine for acquisition and rendering of real-world objects (e.g. human actors) in virtual environments. We will acquire real-world objects with conventional video equipment and produce three-dimensional representations of these objects from which we can render new views. We envision a system where dynamic objects can be displayed at remote locations, hence the graphics engine has to perform in real time. To achieve this goal, we will reconstruct and render the object in a progressive, point-based manner. The rendered object is then composited into a conventional three-dimensional geometry-based scene. We will integrate and test this real-time graphics engine in a networked virtual environment, the blue-c. This advanced system combines immersive projection and video acquisition. Connecting multiple blue-c sites allows for collaborative work of remotely located users.

The Light Field Oracle - An Oracle for Data Acquisition of Light Fields

Reto Lütolf (luetolf@inf.ethz.ch)

http://graphics.ethz.ch/lfo

Image-based rendering (IBR) has received a lot of attention since Light Field Rendering and The Lumigraph were presented in 1996. The beauty of IBR lies in its power to represent scenes with rich and complex geometric detail. Hence, much of the follow-up work suggested various ways and tools to efficiently reconstruct approximations of the underlying plenoptic function - mostly from a set of dense scene samples and sometimes in combination with rough scene geometry. As a result, the initial concepts have been improved significantly in terms of storage efficiency and reconstruction quality.

With a few exceptions, however, relatively little work has been devoted to the development of fast and easy-to-handle data acquisition schemes for light field rendering systems. The light field oracle presents a novel mathematical concept for progressive, hierarchical light field acquisition, representation, processing, and compression. The representation combines conventional wavelet transforms with hierarchical scattered data interpolation and works with any orthogonal wavelet and any interpolation filter kernel. In addition, a set of local decomposition and reconstruction operators has been designed which greatly reduces the computational costs of incremental updates and rendering queries.

Analysis and Visualization of Features in Turbomachinery Fluid Flow

Martin Roth (roth@acm.org)

Our field of application, CFD simulations of hydraulic turbomachinery, is particularly demanding. Flows in water turbines and pumps have fully developed turbulence, are constrained in curved channels of complex geometry and exhibit large downstream pressure gradients and high shear. In addition, commonly used grids have some properties usually not supported in general-purpose visualization packages, such as multi-block structured grids, rotational symmetry and zones moving relative to each other (e.g. stator and runner). The machines are highly optimized, with efficiencies exceeding 93%. Industrial simulations usually solve for steady (time-averaged) solutions of the Navier-Stokes equations. Typical vortices in such flows are weak, yet these vortices are of particular interest to the engineers developing these turbomachines. Extracting vortices is thus the most important step of a feature-based visualization for this task.

ARTiST: A Real-Time Surgery Trainer

Daniel Bielser

The aim of this project is to construct a framework for invasive surgery simulation which enables its users to practise surgery tasks with different surgical instruments. In particular, we would like to allow unrestricted cutting tasks with a virtual scalpel, e.g. the resection of a tumor. All system parts are based on physical models in order to make the simulation close to reality. The virtual surgical tools themselves are attached to a force-feedback device for a convincing haptic representation of the surgical manipulations. The human limbs are based on the Visible Human dataset and will consist of different tissue types with different physical behavior. In particular, the bone structures will be modeled as hard, impenetrable parts.

Non-Manifold Fairing

Andreas Hubeli

http://graphics.ethz.ch/research/past_projects/nemesi

The Nemesi project aims at enabling the interaction with complex datasets. In particular, the application focuses on the representation of and the interaction with non-manifold models, which can be interpreted as a collection of self-intersecting surfaces. An application handling such data is faced with two important challenges: the models are geometrically and topologically very complex.

Researchers in the computer graphics community have attacked the first problem by constructing advanced multiresolution representations, thus enabling users to interact with very detailed surfaces. Additionally, the construction of multiresolution editing tools enabled the construction of efficient modeling frameworks.

However, we note that most of the research has focused on two-manifold surfaces, thus neglecting the topological information of the input data. Therefore, these operators can potentially change the topology of the data, which results in a loss of information. The Nemesi project attacks this second problem by explicitly modeling non-manifolds and by constructing a collection of non-manifold operators.

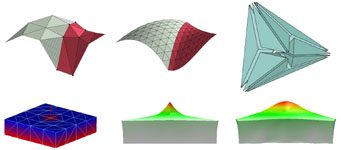

Face Surgery Simulation Using Finite Element Modeling

Martin Roth

http://graphics.ethz.ch/face

Computer-assisted planning of cranio-maxillofacial surgical procedures aims at the prediction of changes on the facial surface prior to actual surgery. In order to model the facial tissue, we rely on the theory of static elastomechanics and use the finite element method to solve the inherent partial differential equations.

For topological and geometric reasons, we choose the representation of tissue as a tetrahedral mesh. This necessitates the definition of a finite element over the tetrahedral domain. The project aims at the construction of a novel finite element based on Bernstein polynomials. Special attention is paid to the construction of C1-continuous transitions across element boundaries. To this aim, the theory of Bernstein-Bézier interpolants is combined with the finite element method. Finally, the resulting cubic C1 element is compared to C0-continuous linear, quadratic and cubic tetrahedral elements.
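As a small illustration of the building blocks mentioned above, the sketch below evaluates the cubic Bernstein basis over a tetrahedron in barycentric coordinates. It is a minimal Python sketch under stated assumptions and does not construct the C1 element itself: it only shows the standard basis B_ijkl(u) = 3!/(i! j! k! l!) * u0^i * u1^j * u2^k * u3^l with i + j + k + l = 3. The function names `multi_indices` and `bernstein` are hypothetical.

```python
from itertools import product
from math import factorial

def multi_indices(degree):
    """All (i, j, k, l) with non-negative entries summing to `degree`."""
    return [idx for idx in product(range(degree + 1), repeat=4)
            if sum(idx) == degree]

def bernstein(idx, bary):
    """Evaluate the Bernstein polynomial with multi-index `idx` at the
    barycentric coordinates `bary` (four weights that sum to 1)."""
    coeff = factorial(sum(idx))
    for i in idx:
        coeff //= factorial(i)  # multinomial coefficient, always an integer
    value = float(coeff)
    for i, u in zip(idx, bary):
        value *= u ** i
    return value

cubic = multi_indices(3)           # 20 basis functions for a cubic element
point = (0.1, 0.2, 0.3, 0.4)       # an interior point of the tetrahedron
total = sum(bernstein(idx, point) for idx in cubic)
```

The 20 cubic basis functions are non-negative inside the tetrahedron and sum to one at every point, which the variable `total` checks numerically for one sample point.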

Experimental Volume Visualization Environment (EVOLVE)

Lars Lippert

http://graphics.ethz.ch/evolve

The Experimental Volume Visualization Environment (EVOLVE) is a volume visualization system that combines the advantages of Fourier-domain volume rendering with those of wavelet-domain rendering. It is designed to achieve interactive rendering rates on low-cost workstations and for internet applications. The rendering process is based on a fast and accurate calculation of hierarchical splats.

Ivory: Visualization of Multidimensional Data Relations using Physically-Based Models

Thomas Sprenger

http://graphics.ethz.ch/ivory

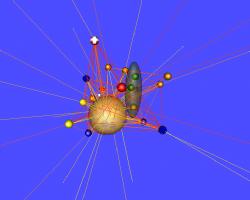

In this project we develop a framework for the visualization and analysis of multidimensional data relations. The system is based on a quantification of the similarity of related objects, which governs the parameters of a mass-spring system organized as two concentric spheres. Since the spring stiffnesses correspond to the computed similarity measures, the system converges to an energy minimum, which reveals multidimensional relations and adjacencies in terms of spatial neighborhood. In order to simplify complex setups, we provide an additional clustering algorithm for postprocessing.
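The idea of letting similarity drive a mass-spring system can be sketched in a few lines of Python. This is a simplified illustration, not the Ivory implementation: it omits the two concentric spheres and the clustering step, relaxes the system by plain gradient descent, and assumes a stiffness equal to the similarity s and a rest length of 1 - s for each pair. The names `energy` and `relax` and both mappings are hypothetical choices.

```python
import math

def energy(pos, sim):
    """Total spring energy: 0.5 * stiffness * (distance - rest_length)^2 per pair."""
    total = 0.0
    for i in range(len(pos)):
        for j in range(i + 1, len(pos)):
            dist = math.dist(pos[i], pos[j])
            total += 0.5 * sim[i][j] * (dist - (1.0 - sim[i][j])) ** 2
    return total

def relax(pos, sim, step=0.1, iterations=500):
    """Move every object along the net spring force for a fixed number of steps."""
    pos = [list(p) for p in pos]
    dims = len(pos[0])
    for _ in range(iterations):
        forces = [[0.0] * dims for _ in pos]
        for i in range(len(pos)):
            for j in range(i + 1, len(pos)):
                delta = [b - a for a, b in zip(pos[i], pos[j])]
                dist = math.hypot(*delta) or 1e-9
                # Hooke's law: a stretched spring pulls i and j together.
                mag = sim[i][j] * (dist - (1.0 - sim[i][j]))
                for k, c in enumerate(delta):
                    forces[i][k] += mag * c / dist
                    forces[j][k] -= mag * c / dist
        for i in range(len(pos)):
            for k in range(dims):
                pos[i][k] += step * forces[i][k]
    return pos

# Objects 0 and 1 are very similar; object 2 is unlike both.
similarity = [
    [1.0, 0.9, 0.1],
    [0.9, 1.0, 0.1],
    [0.1, 0.1, 1.0],
]
start = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0)]
layout = relax(start, similarity)
```

After relaxation the two similar objects end up close together while the dissimilar one stays farther away, so spatial neighborhood in `layout` reflects the similarity matrix.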

Interactive Display Bubbles

Daniel Cotting

http://graphics.ethz.ch/bubbles

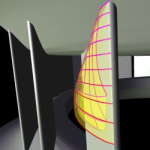

Computer technology is increasingly migrating from traditional desktops to novel forms of ubiquitous displays on tabletops and walls of our environments. This process is mainly driven by the inherent limitations of classical computer and home entertainment screens, which are generally restricted in configuration and interaction possibilities. Due to these limitations, users are required to adapt to given setups, instead of the display systems continuously accommodating the users' needs. Based on this observation, we have developed techniques for a fully interactive, projector-equipped visual workspace which provides flexible global control of the projected light at any location of the environment. In an intuitive way and with a maximum degree of flexibility, on-demand displays can be instantiated on any surface within this space. Subsequently, by scanning the environment, the displays can dynamically adapt to objects and persons in a smart way.

Point-Based Geometry Processing

Mark Pauly

Sathya Krishnamurthy

http://graphics.ethz.ch/pointbasedgeometry

In this project we investigate new methods for processing point-based geometric models. Instead of using traditional polygonal mesh representations, we represent 3D shapes using discrete sets of unconnected points. Our aim is to devise new signal processing algorithms for filtering, resampling and compression of point-based models. Furthermore we want to study physically based deformation and dynamics for point-based objects that can be integrated into a general modeling and animation framework.

Surfels

Matthias Zwicker

http://graphics.ethz.ch/surfels

The goal of this thesis is to explore the use of surface elements, or surfels, as rendering primitives. A surfel is a point sample of an object surface that comprises geometric attributes such as position and normal as well as photometric attributes such as a diffuse color. We want to use our technique to directly render scanned objects and other highly complex 3D models. Our research focuses on strategies for discretizing synthetic graphics objects into point-based representations, storing them efficiently and rendering high quality images. We are particularly interested in the aliasing problem arising when generating raster images from point-based objects. We also investigate the generalization of point-based rendering to include surface and volumetric data.
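The surfel definition above maps naturally onto a small record type. The following Python sketch is only an illustration of that data structure: the field names, the `Surfel` class, and the `lambert` helper are hypothetical, and the shading function uses the standard Lambertian cosine term rather than the rendering method developed in the thesis.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]

@dataclass(frozen=True)
class Surfel:
    """A point sample of a surface with geometric and photometric attributes."""
    position: Vec3  # geometric attribute: location of the sample
    normal: Vec3    # geometric attribute: surface normal, assumed unit length
    diffuse: Vec3   # photometric attribute: diffuse RGB color in [0, 1]

def lambert(surfel: Surfel, light_dir: Vec3) -> Vec3:
    """Diffuse color scaled by the clamped cosine between the normal and a
    unit vector pointing towards the light."""
    cos_theta = max(0.0, sum(n * l for n, l in zip(surfel.normal, light_dir)))
    return tuple(c * cos_theta for c in surfel.diffuse)

sample = Surfel(position=(0.0, 0.0, 0.0),
                normal=(0.0, 0.0, 1.0),
                diffuse=(1.0, 0.5, 0.0))
lit = lambert(sample, (0.0, 0.0, 1.0))    # light directly above the surface
dark = lambert(sample, (0.0, 0.0, -1.0))  # light behind the surface
```

Because each surfel carries everything needed for shading, a renderer can process samples independently without any connectivity information.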

3D Video

Stephan Würmlin

Michael Waschbüsch

Edouard Lamboray

http://graphics.ethz.ch/3dvideo

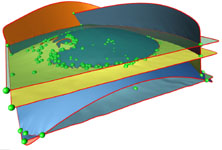

The 3D Video project aims at developing novel algorithms for extracting and representing 3D data obtained from dynamic real-world scenes. We investigate the complete 3D Video pipeline including data acquisition, 3D reconstruction, compression and rendering for real-time and off-line systems.